Unit 2.3 Extracting Information from Data, Pandas (Notebook)

Data connections, trends, and correlation. Pandas is introduced as it could be valuable for PBL, data validation, as well as understanding College Board Topics.

- 2.3 extracting information from data notes

- Pandas and DataFrames

- Cleaning Data

- Extracting Info

- Create your own DataFrame

- Example of larger data set

- APIs are a Source for Writing Programs with Data

- Hacks

- My Fitness Dataset:

- 2.3 College Board Questions Explanations

- Data Compression

- Extracting Information From Data

- Q1 Challenge due to lack of unique ID

- Q2 Challenge in analyzing data from many counties

- Q3 Challenges with city data entered by users

- Q4 Determine Artist with the most concert attendees

- Q5 Information determined using dashboard metadata

- Q6 Information from student work habit survey

- Application of these questions

- Using Programs with Data

- Hack Helpers

- Machine Learning

2.3 extracting information from data notes

- People build careers on skill with tools such as Pandas and Jupyter notebooks.

Pandas

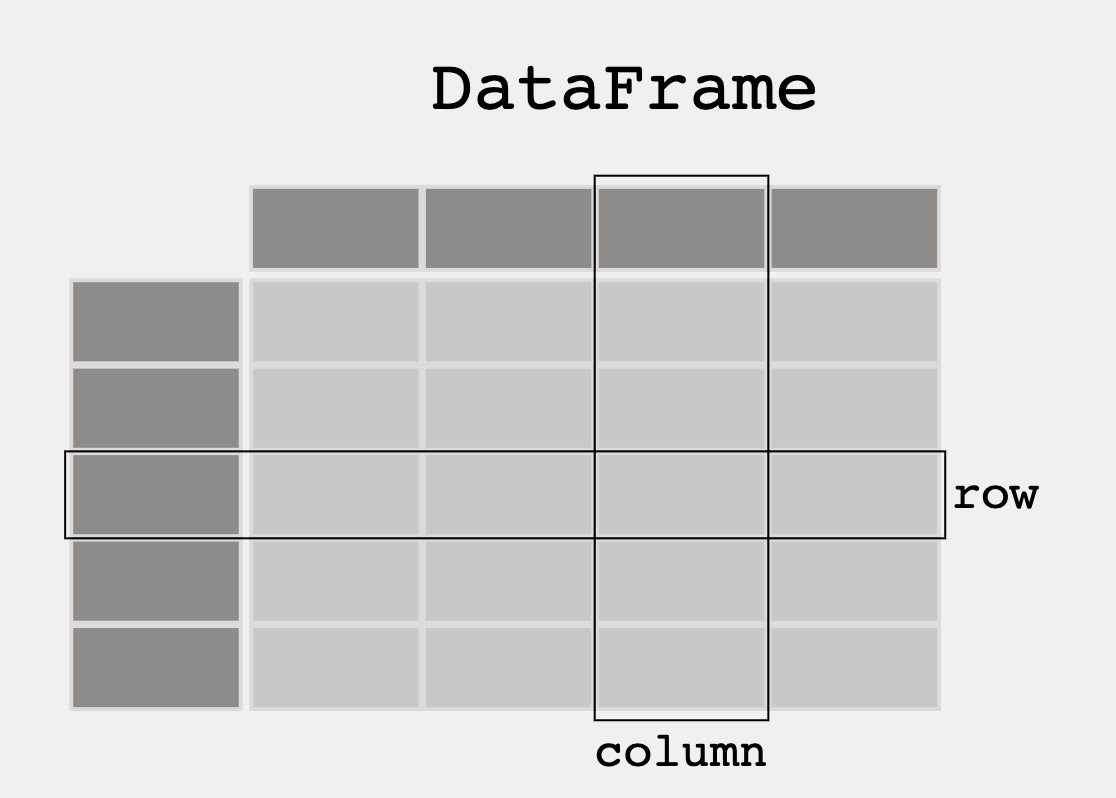

- A DataFrame is a table, similar to a table in a SQLite database, that stores both data and metadata.

- Pandas allows you to work with both data and metadata

- Allows you to create your own DataFrame in Python
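To illustrate the data versus metadata distinction in the notes above, here is a minimal sketch. The column names and values are made up for illustration and are not taken from the lesson files.

```python
import pandas as pd

# Hypothetical sample values, not from the lesson files
df = pd.DataFrame({"name": ["Ana", "Ben", "Cy"], "gpa": [3.5, 2.9, 3.8]})

# The data: the values stored in the table
print(df)

# The metadata: information that describes the table
print(list(df.columns))  # column labels
print(df.shape)          # (number of rows, number of columns)
print(df.dtypes)         # data type of each column
```

The column labels, shape, and data types describe the table rather than being values inside it, which is why they count as metadata.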

Cleaning Data

- A common problem in code is keeping out the data that could lead to errors.
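One possible way to keep bad values out is sketched below. The records are hypothetical; the idea is to turn anything that is not a number into a missing value and then drop those rows.

```python
import pandas as pd

# Hypothetical raw records: one unparseable GPA and one missing GPA
raw = pd.DataFrame({
    "student_id": [1, 2, 3, 4],
    "gpa": ["3.2", "n/a", None, "3.9"],
})

# Convert text to numbers; anything that cannot be parsed becomes NaN
raw["gpa"] = pd.to_numeric(raw["gpa"], errors="coerce")

# Keep only the rows that still have a usable GPA
clean = raw.dropna(subset=["gpa"])
print(clean)
```

Dropping rows is only one choice. Depending on the data set, filling in a default or correcting the entry by hand may be more appropriate.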

Extracting Info

DataFrame Extract Column

- The index labels each row, so it shows how many data points are in the column.

- print(df[["GPA"]]) prints the data in the named column of the referenced DataFrame.

Dataframe selection or filter

- Negating a filter (related to De Morgan's laws): the opposite of < is >=, that is, greater than or equal to.
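The note about negating a filter can be checked with a short sketch. The GPA values below are hypothetical.

```python
import pandas as pd

df = pd.DataFrame({"GPA": [2.5, 3.0, 3.7]})  # hypothetical values

below = df[df.GPA < 3.0]          # rows strictly below 3.0
not_below = df[~(df.GPA < 3.0)]   # ~ negates the whole condition
at_least = df[df.GPA >= 3.0]      # the same rows, written directly

print(not_below.equals(at_least))
```

The two forms agree here because every row has a GPA. Rows with a missing GPA would behave differently, since any comparison with a missing value is False.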

Head and tail

- Head is the front of a data list; tail is the back of a data list.

APIs are a source for writing programs with data

Pandas and DataFrames

In this lesson we will be exploring data analysis using Pandas.

- College Board talks about ideas like

- Tools. "the ability to process data depends on users capabilities and their tools"

- Combining Data. "combine county data sets"

- Status on Data. "determining the artist with the greatest attendance during a particular month"

- Data poses challenge. "the need to clean data", "incomplete data"

- From Pandas Overview -- When working with tabular data, such as data stored in spreadsheets or databases, pandas is the right tool for you. pandas will help you to explore, clean, and process your data. In pandas, a data table is called a DataFrame.

'''Pandas is used to gather data sets through its DataFrames implementation'''

import pandas as pd

df = pd.read_json('files/grade.json')

print(df)

# What part of the data set needs to be cleaned?

# From PBL learning, what is a good time to clean data? Hint, remember Garbage in, Garbage out?

print(df[['GPA']])

print()

#try two columns and remove the index from print statement

print(df[['Student ID','GPA']].to_string(index=False))

print(df.sort_values(by=['GPA']))

print()

#sort the values in reverse order

print(df.sort_values(by=['GPA'], ascending=False))

print(df[df.GPA > 3.00])

print(df[df.GPA == df.GPA.max()])

print()

print(df[df.GPA == df.GPA.min()])

import pandas as pd

#the data can be stored as a python dictionary

data = {  # named data rather than dict so the built-in dict type is not shadowed

    "calories": [420, 380, 390],

    "duration": [50, 40, 45]

}

#stores the data in a data frame

print("-------------Dict_to_DF------------------")

df = pd.DataFrame(data)

print(df)

print("----------Dict_to_DF_labels--------------")

#or with the index argument, you can label rows.

df = pd.DataFrame(data, index = ["day1", "day2", "day3"])

print(df)

print("-------Examine Selected Rows---------")

#use a list for multiple labels:

print(df.loc[["day1", "day3"]])

#refer to the row index:

print("--------Examine Single Row-----------")

print(df.loc["day1"])

print(df.info())

import pandas as pd

#read csv and sort 'Duration' largest to smallest

df = pd.read_csv('files/data.csv').sort_values(by=['Duration'], ascending=False)

print("--Duration Top 10---------")

print(df.head(10))

print("--Duration Bottom 10------")

print(df.tail(10))

'''Pandas can be used to analyze data'''

import pandas as pd

import requests

def fetch():

    '''Obtain data from an endpoint'''

    url = "https://flask.nighthawkcodingsociety.com/api/covid/"

    # use separate names so the function and the json module are not shadowed

    response = requests.get(url)

    data = response.json()

    # filter data for requirement

    df = pd.DataFrame(data['countries_stat']) # filter endpoint for country stats

    print(df.loc[0:5, 'country_name':'deaths']) # show row 0 through 5 and columns country_name through deaths

fetch()

Hacks

Early Seed award

- Add this Blog to your own Blogging site.

- Have all lecture files saved to your files directory before Tech Talk starts. Have data.csv open in vscode. Don't tell anyone. Show to Teacher.

AP Prep

- Add this Blog to your own Blogging site. In the Blog add notes and observations on each code cell.

- In blog add College Board practice problems for 2.3.

The next 4 weeks, Teachers want you to improve your understanding of data. Look at the blog and others on Unit 2. Your intention is to find some things to differentiate your individual College Board project.

- Create or Find your own dataset. The suggestion is to use a JSON file, integrating with your PBL project would be Fambulous.

When choosing a data set, think about the following:

- Does it have a good sample size?

- Is there bias in the data?

- Does the data set need to be cleaned?

- What is the purpose of the data set?

- ...

- Continue this Blog by using Pandas to extract info from that dataset (ex. max, min, mean, median, mode, etc.)

import pandas as pd

# Create a fitness dataset

data = {

'Name': ['Liav', 'Safin', 'Adi', 'Arnav', 'Taiyo'],

'Age': [25, 32, 47, 19, 56],

'Weight': [75.5, 68.2, 81.7, 61.8, 92.3],

'Height': [180, 165, 175, 160, 190],

'BMI': [23.3, 25.0, 26.6, 24.1, 25.5]

}

df = pd.DataFrame(data)

# Display the dataset

print(df)

print('Etracker User Data:')

# Calculate some basic statistics

print('Age:')

age_stats = {'Max': df['Age'].max(),

'Min': df['Age'].min(),

'Mean': df['Age'].mean(),

'Median': df['Age'].median(),

'Mode': df['Age'].mode()[0]}

for stat, value in age_stats.items():

    print(f'User {stat} age: {value}')

print('Weight:')

weight_stats = {'Max': df['Weight'].max(),

'Min': df['Weight'].min(),

'Mean': df['Weight'].mean(),

'Median': df['Weight'].median(),

'Mode': df['Weight'].mode()[0]}

for stat, value in weight_stats.items():

    print(f'User {stat} Weight: {value}')

print('Height:')

height_stats = {'Max': df['Height'].max(),

'Min': df['Height'].min(),

'Mean': df['Height'].mean(),

'Median': df['Height'].median(),

'Mode': df['Height'].mode()[0]}

for stat, value in height_stats.items():

    print(f'User {stat} Height: {value}')

print('BMI:')

bmi_stats = {'Max': df['BMI'].max(),

'Min': df['BMI'].min(),

'Mean': df['BMI'].mean(),

'Median': df['BMI'].median(),

'Mode': df['BMI'].mode()[0]}

# loop over bmi_stats here (the original looped over height_stats by mistake)

for stat, value in bmi_stats.items():

    print(f'User {stat} BMI: {value}')

2.3 College Board Questions Explanations

Data Compression

Q1 Advantage of lossless over lossy compression

- The question asks us to identify an advantage of a lossless compression algorithm over a lossy one.

- The only option that made sense was that lossless compression can guarantee reconstruction of the original data, while lossy compression cannot. This is the main difference between lossy and lossless compression that we learned, so it must be the correct answer.

Q2 Compression algorithm for storing a data file

- The question describes a user who wants to save a data file to online storage. They want to reduce the size of the file, and we are asked which type of compression is best if they need to be able to restore the file to its original version.

- The only right option is lossless compression, because being able to recover the original file is the main purpose of using it.

Q3 True Statement about compression

- The question describes a software developer for a social media platform and asks us to suggest which type of image compression they should use for their site.

- They should most likely use lossy compression, since it produces smaller files and therefore shorter transmission times. Some image information is lost, but the loss is usually not noticeable.
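The difference between the two kinds of compression can be sketched with Python's standard zlib module. The byte string is hypothetical, and keeping every other byte is only a crude stand-in for real lossy compression, not how image formats actually work.

```python
import zlib

original = b"AAAABBBBCCCCDDDD" * 64  # hypothetical, highly repetitive data

# Lossless: compress, then decompress back to the exact original bytes
packed = zlib.compress(original)
restored = zlib.decompress(packed)
print(len(original), len(packed), restored == original)

# Crude stand-in for lossy compression: keep every other byte.
# The result is smaller, but the dropped bytes cannot be recovered.
lossy = original[::2]
print(len(lossy), lossy == original)
```

The lossless round trip returns exactly the bytes that went in, which is the guarantee the first two questions rely on.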

Application of these questions

- The main purpose of these questions was to make sure we can differentiate lossy and lossless compression. I am now very familiar with their definitions.

- I can apply this to my daily life: I use social media every day, so I am now more familiar with the type of image compression that these social media companies most likely use.

Extracting Information From Data

Q1 Challenge due to lack of unique ID

- This question describes a researcher who is analyzing student data to find a relationship between GPA and absences. They have access to a database, but it identifies students only by name.

- The problem asks why it is bad not to have a unique ID for each student. This is most definitely because two students with the same name could be confused with each other.
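A short sketch of the name collision problem follows. The students, GPAs, and ID numbers are all made up for illustration.

```python
# Hypothetical records: two different students share the name "Alex Kim"
gpa_rows = [("Alex Kim", 3.9), ("Alex Kim", 2.1), ("Sam Lee", 3.0)]

# Keying by name silently overwrites the first Alex Kim
by_name = {name: gpa for name, gpa in gpa_rows}
print(len(gpa_rows), len(by_name))

# Keying by a unique ID (assumed here) keeps every student separate
id_rows = [(101, "Alex Kim", 3.9), (102, "Alex Kim", 2.1), (103, "Sam Lee", 3.0)]
by_id = {sid: (name, gpa) for sid, name, gpa in id_rows}
print(len(by_id))
```

Three records go in, but only two survive when the name is the key, and nothing warns the researcher that a student was lost.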

Q2 Challenge in analyzing data from many counties

- Some researchers want to analyze pollution in 3,000 US counties, and the question asks what a possible problem with this could be.

- The only correct option that comes to mind is that different counties organize data in different ways. The researchers cannot expect every county to organize its data in the same way, so it would be very hard to combine all the files in one place and analyze them.
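Here is a minimal sketch of what combining differently organized county files might involve. The column names, units, and readings are assumptions made up for this example.

```python
import pandas as pd

# Hypothetical exports from two counties with different column names and units
county_a = pd.DataFrame({"county": ["A"], "pm25_ug_m3": [12.0]})
county_b = pd.DataFrame({"County Name": ["B"], "PM2.5 (mg/m3)": [0.009]})

# Standardize the names and units before combining
county_b = county_b.rename(
    columns={"County Name": "county", "PM2.5 (mg/m3)": "pm25_ug_m3"}
)
county_b["pm25_ug_m3"] = county_b["pm25_ug_m3"] * 1000  # milligrams to micrograms

combined = pd.concat([county_a, county_b], ignore_index=True)
print(combined)
```

With 3,000 counties, each mismatch like this has to be found and handled, which is the challenge the question points at.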

Q3 Challenges with city data entered by users

- The question asks for possible problems with a program where users input a city name and information about that city is output.

- The obvious problems are that people might misspell the city name or enter an abbreviation, and the program would not account for these user errors.
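A program could reduce some of these input problems by normalizing what users type. The sketch below is one assumed approach. The alias table and the `normalize_city` helper are hypothetical and not part of the question.

```python
# Hypothetical lookup of known abbreviations; a real program would need a fuller list
ALIASES = {"sd": "san diego", "la": "los angeles", "nyc": "new york"}

def normalize_city(text):
    """Trim whitespace, ignore case, and expand known abbreviations."""
    key = " ".join(text.strip().lower().split())
    return ALIASES.get(key, key)

for entry in ["  San Diego ", "SD", "san   diego", "San Deigo"]:
    print(repr(entry), "->", normalize_city(entry))
```

Spacing, capitalization, and listed abbreviations are handled, but the misspelling "San Deigo" still slips through, so the challenge in the question does not fully go away.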

Q4 Determine Artist with the most concert attendees

- The program's purpose is to find the artist with the greatest attendance across the concerts in a month, and the question asks what data should be added besides date, revenue, and artist.

- Food and drink sales, length of show, and start time have nothing to do with the number of tickets sold. Average ticket price makes sense: since we already know how much money was made from tickets, dividing that revenue by the average ticket price gives a rough estimate of how many people attended.
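The estimate described above is a single division. The figures below are hypothetical.

```python
# Hypothetical figures for one artist's concert
ticket_revenue = 250_000.00   # total revenue from ticket sales, in dollars
average_ticket_price = 62.50  # average price per ticket, in dollars

# Attendance is approximately revenue divided by average ticket price
estimated_attendance = ticket_revenue / average_ticket_price
print(round(estimated_attendance))
```

Repeating this for each artist and taking the largest result would identify the artist with the greatest attendance.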

Q5 Information determined using dashboard metadata

- Image data from the view of a car's driver is stored every second, along with the car's speed and the specific date and time. The problem asks which data point could be found without using metadata.

- This would be the number of bikes that passed by the car, since the rest of the options are found using averages or calculations based on the metadata, while the number of bikes is the only option that comes from the image data itself.

Q6 Information from student work habit survey

- The question asks us to analyze which questions can be answered based on the responses to a survey that a teacher gave to their students. The survey asks how long homework takes, how long students study for tests, and whether they enjoy those subjects.

- Based on these survey questions, the teacher would definitely be able to tell which subjects the students like, since that is the entire last question (I), and whether students spend more time studying or doing homework (II), so those are the two right answer choices.

Application of these questions

- These questions were very effective and beneficial in ensuring that we understand the different ways to extract information from data and can solve the problems that come up when doing so. They also checked whether we understand what metadata is, which will definitely prepare us well for the College Board exam.

- As a programmer, these questions will benefit me greatly: I will know to always look for problems in a dataset that I am analyzing, and to understand thoroughly what outcome I want.

Using Programs with Data

Q1 Bookstore Spreadsheet

- The question gives us a spreadsheet of books in a bookstore, where an employee wants to count the number of books that are mysteries, cost under $10, and have at least one copy in stock.

- The expression that gives us that is (genre = "mystery") AND ((1 ≤ num) AND (cost < 10.00)), because a book must meet all three conditions to be counted, so AND is required. Using OR would also count books that meet only one of the conditions.
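The same expression can be tried out in Pandas. The inventory below is hypothetical, and the column names simply follow the expression in the question.

```python
import pandas as pd

# Hypothetical inventory; column names follow the expression in the question
books = pd.DataFrame({
    "genre": ["mystery", "mystery", "romance", "mystery"],
    "num":   [3, 0, 5, 1],
    "cost":  [8.99, 7.50, 6.00, 12.00],
})

# All three conditions must hold, so they are joined with & (AND)
match = (books.genre == "mystery") & (books.num >= 1) & (books.cost < 10.00)
print(match.sum())

# Joining with | (OR) instead counts books that meet any one condition
loose = (books.genre == "mystery") | (books.num >= 1) | (books.cost < 10.00)
print(loose.sum())
```

Only the first book satisfies all three conditions, while every book satisfies at least one, which shows why OR gives the wrong count.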

Q2 Clothing store sales information

- A store owner tracks the date, payment method, number of items, and dollars paid for every transaction, and the question asks which statement can be determined to be true over a 7-day period.

- The owner will be able to track the number of items purchased throughout that 7-day period, since they can add up the number of items purchased in the transactions on each day. I don't see what this question has to do with programming, but it was quite simple to solve.

Q3 Data files to contact customers who use batteries

- A company keeps data files about its customers, products, and purchases. They want to know how to use these data files to determine which customers are the best fit to target in an email campaign for their rechargeable battery pack.

- The best option would be to match customer IDs against the IDs of the items they purchased, in order to find the customers who would possibly need or want this rechargeable battery pack.
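Matching customer IDs to purchased item IDs could look like the sketch below. The three data files are stood in for by small hypothetical dictionaries and lists, and the "uses batteries" flag is an assumption.

```python
# Hypothetical stand-ins for the three data files
customers = {1: "ana@example.com", 2: "ben@example.com", 3: "cy@example.com"}
products = {10: ("flashlight", True), 11: ("notebook", False), 12: ("toy robot", True)}
purchases = [(1, 10), (2, 11), (3, 12), (1, 11)]  # (customer_id, product_id)

# IDs of the products that use batteries (second field is the assumed flag)
battery_ids = {pid for pid, (_, uses_batteries) in products.items() if uses_batteries}

# Customers who bought at least one battery-powered product
target_ids = sorted({cid for cid, pid in purchases if pid in battery_ids})
emails = [customers[cid] for cid in target_ids]
print(emails)
```

The purchases file is what links the other two: it connects each customer ID to the product IDs that customer bought.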

Q4 Museum photograph spreadsheet

- There is a spreadsheet of information about the pictures in a museum's collection, listing the photographer, subject, year, and whether the picture is publicly available (T/F). A program needs to find which photographer took the oldest picture in the collection, and the problem asks how you would do so.

- This is quite simple. The best option would be to filter by photographer and sort by year, or vice versa. This would output the years of each photo, in order, alongside the photographer who took each one.
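Sorting by year is enough to surface the oldest picture, as this sketch with hypothetical records suggests.

```python
import pandas as pd

# Hypothetical collection records
photos = pd.DataFrame({
    "photographer": ["Lee", "Cruz", "Lee", "Okoye"],
    "subject": ["bridge", "harbor", "market", "portrait"],
    "year": [1931, 1904, 1958, 1922],
})

# Sort by year, oldest first; the first row names the photographer
oldest = photos.sort_values(by="year").iloc[0]
print(oldest["photographer"], oldest["year"])
```

After the sort, the photographer of the oldest picture is simply the one listed in the first row.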

Q5 Radio Show Spreadsheet

- A spreadsheet has information about the schedule for a college radio station, including show name, genre, day, start time, and end time. A programmer wants to count the number of shows that are both a talk show and on a Saturday or Sunday.

- The best expression to evaluate to true for this situation is (genre = "talk") AND ((day = "Saturday") OR (day = "Sunday")). The AND requires that a show be a talk show and also fall on a weekend day, while the inner OR accepts either Saturday or Sunday.
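The expression translates almost word for word into Python. The schedule rows below are hypothetical.

```python
# Hypothetical schedule rows
shows = [
    {"name": "Morning Chat", "genre": "talk",  "day": "Saturday"},
    {"name": "Jazz Hour",    "genre": "music", "day": "Sunday"},
    {"name": "Campus News",  "genre": "talk",  "day": "Monday"},
    {"name": "Open Mic",     "genre": "talk",  "day": "Sunday"},
]

# (genre = "talk") AND ((day = "Saturday") OR (day = "Sunday"))
weekend_talk = [
    s for s in shows
    if s["genre"] == "talk" and (s["day"] == "Saturday" or s["day"] == "Sunday")
]
print(len(weekend_talk))
```

The parentheses around the OR matter: without them, Python evaluates `and` before `or`, so every Sunday show would be counted regardless of genre.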

Use of Databases to display animal information

- A preserve develops an exhibit that shows various facts about each animal based on the selection that each guest makes. The preserve has two databases of information available to use for the exhibit. The first database contains information for each animal’s name, classification, skin type, and thermoregulation. The second database contains information for each animal’s name, lifestyle, average life span, and top speed. The problem asks how these databases can help develop the interactive exhibit.

- Both of these databases are required for the information that they want to display at this interactive exhibit, because together they contain the specific data that they are looking for.
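Because both databases include the animal's name, they can be joined on it. The sketch below uses made-up rows and only a few of the columns the question lists.

```python
import pandas as pd

# Hypothetical rows; the values are for illustration only
db1 = pd.DataFrame({"name": ["cheetah", "tortoise"],
                    "classification": ["mammal", "reptile"],
                    "skin_type": ["fur", "scales"]})
db2 = pd.DataFrame({"name": ["cheetah", "tortoise"],
                    "avg_life_span": [12, 100],
                    "top_speed_kmh": [100.0, 0.3]})

# Join on the shared animal name so one selection can show every fact
exhibit = db1.merge(db2, on="name")
print(exhibit[exhibit.name == "cheetah"])
```

When a guest selects an animal, one lookup in the combined table returns the facts from both sources.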

Application of these questions

- These questions are less about programming specifically, but they gave us a great idea of how to analyze the data, specific outputs, and purposes of various databases, datasets, and spreadsheets.

- This will be very beneficial for our programming futures, since it gave us situations that could be very similar to those in our future projects, along with the problem-solving and data analysis skills necessary to handle them.

Hack Helpers

Here is how Mort started on this assignment by asking ChatGPT ... Regarding Python Pandas, what are some data sets that would be good for learning Pandas?

There are many data sets that are suitable for learning pandas, depending on your interests and the skills you want to develop. Here are some suggestions:

- Titanic Dataset: This is a classic dataset for data analysis and machine learning, and is often used as an introduction to pandas. The dataset contains information about passengers on the Titanic, including their demographics, ticket class, and survival status.

- Iris Dataset: This is another classic dataset that is often used in machine learning and data analysis courses. The dataset contains information about iris flowers, including their sepal length, sepal width, petal length, and petal width.

- NBA Player Stats: This dataset contains information about NBA players, including their stats for various games, their positions, and their salaries. It is a great dataset for learning how to clean and manipulate data using pandas.

- Housing Prices Dataset: This dataset contains information about housing prices in a particular city or region, including variables such as the number of bedrooms, square footage, and price. It is a great dataset for learning how to manipulate and analyze numerical data using pandas.

- COVID-19 Dataset: This dataset contains information about the COVID-19 pandemic, including the number of cases, deaths, and recoveries in various countries and regions. It is a great dataset for learning how to manipulate and analyze time-series data using pandas.

- World Bank Dataset: This dataset contains information about economic indicators from various countries, such as GDP, population, and poverty rates. It is a great dataset for learning how to manipulate and analyze large datasets using pandas.

These are just a few examples of the many datasets that are suitable for learning pandas. The best dataset for you will depend on your interests and the specific skills you want to develop.

Follow up question, "where can I find Titanic data set?"

The Titanic dataset is a popular dataset for data analysis and machine learning, and it can be found on various websites and data repositories. Here are a few places where you can find the Titanic dataset:

- Kaggle: The Titanic dataset is available on Kaggle, which is a popular platform for data scientists and machine learning enthusiasts. You can download the dataset from the Kaggle website after creating an account.

- UCI Machine Learning Repository: The Titanic dataset is also available on the UCI Machine Learning Repository, which is a collection of datasets that are commonly used for machine learning research. You can download the dataset from the UCI Machine Learning Repository website.

- Seaborn library: If you have the Seaborn library installed in your Python environment, you can load the Titanic dataset directly from the library using the following code:

import seaborn as sns

titanic_data = sns.load_dataset('titanic')

import seaborn as sns

# Load the titanic dataset

titanic_data = sns.load_dataset('titanic')

print("Titanic Data")

print(titanic_data.columns) # titanic data set

print(titanic_data[['survived','pclass', 'sex', 'age', 'sibsp', 'parch', 'class', 'fare', 'embark_town']]) # look at selected columns

Use Pandas to clean the data. Most analysis tools, such as machine learning libraries and even Pandas itself, work best when data is in a standardized format. Getting the data into that format is called 'cleaning' or 'preprocessing' the data.

# Preprocess the data

from sklearn.preprocessing import OneHotEncoder

td = titanic_data

td.drop(['alive', 'who', 'adult_male', 'class', 'embark_town', 'deck'], axis=1, inplace=True)

td.dropna(inplace=True)

td['sex'] = td['sex'].apply(lambda x: 1 if x == 'male' else 0)

td['alone'] = td['alone'].apply(lambda x: 1 if x == True else 0)

# Encode categorical variables

enc = OneHotEncoder(handle_unknown='ignore')

enc.fit(td[['embarked']])

onehot = enc.transform(td[['embarked']]).toarray()

cols = ['embarked_' + val for val in enc.categories_[0]]

td[cols] = pd.DataFrame(onehot, index=td.index)  # reuse td's index so rows stay aligned after dropna

td.drop(['embarked'], axis=1, inplace=True)

td.dropna(inplace=True)

print(td)

The result of cleaning data is that it becomes easier to analyze and draw conclusions from. Looking at the Titanic data, once it is clean you would probably want to estimate the likely chance of survival.

This would involve analyzing various factors (such as age, gender, class, etc.) that may have affected a person's chances of survival, and using that information to make predictions about whether an individual would have survived or not.

Data description:

- Survival - Survival (0 = No; 1 = Yes). Not included in test.csv file.

- Pclass - Passenger Class (1 = 1st; 2 = 2nd; 3 = 3rd)

- Name - Name

- Sex - Sex

- Age - Age

- Sibsp - Number of Siblings/Spouses Aboard

- Parch - Number of Parents/Children Aboard

- Ticket - Ticket Number

- Fare - Passenger Fare

- Cabin - Cabin

- Embarked - Port of Embarkation (C = Cherbourg; Q = Queenstown; S = Southampton)

- Perished Mean/Average

print(td.query("survived == 0").mean())

- Survived Mean/Average

print(td.query("survived == 1").mean())

Survived Max and Min Stats

print(td.query("survived == 1").max())

print(td.query("survived == 1").min())

Machine Learning

From Tutorials Point: Scikit-learn (Sklearn) is the most useful and robust library for machine learning in Python. It provides a selection of efficient tools for machine learning and statistical modeling including classification, regression, clustering and dimensionality reduction via a consistent interface in Python.

Description from ChatGPT: The Titanic dataset is a popular dataset for data analysis and machine learning. In the context of machine learning, accuracy refers to the percentage of correctly classified instances in a set of predictions. In this case, the testing data is a subset of the original Titanic dataset that the decision tree model has not seen during training ... After training the decision tree model on the training data, we can evaluate its performance on the testing data by making predictions on the testing data and comparing them to the actual outcomes. The accuracy of the decision tree classifier on the testing data tells us how well the model generalizes to new data that it hasn't seen before ... For example, if the accuracy of the decision tree classifier on the testing data is 0.8 (or 80%), this means that 80% of the predictions made by the model on the testing data were correct ... Chance of survival could be predicted using various machine learning techniques, including decision trees, logistic regression, or support vector machines, among others.

- The code below prepares data for further analysis and reports an accuracy. IMO, the next step would be to insert a new passenger and predict survival. The same approach could be applied to other datasets, such as predicting whether a player will hit a home run or whether a stock will go up or down.

- Decision Trees: prediction by a piecewise constant approximation.

- Logistic Regression: the probabilities describing the possible outcomes.
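To make the accuracy definition above concrete, here is a small sketch that computes it by hand. The labels are hypothetical and do not come from the Titanic data.

```python
# Hypothetical labels: 1 = survived, 0 = perished
y_true = [1, 0, 0, 1, 0, 1, 0, 0, 1, 0]
y_pred = [1, 0, 1, 1, 0, 0, 0, 0, 1, 0]

# Accuracy = number of correct predictions / total number of predictions
correct = sum(t == p for t, p in zip(y_true, y_pred))
accuracy = correct / len(y_true)
print(accuracy)
```

Eight of the ten predictions match the actual outcomes, so the accuracy is 0.8, the same kind of figure that `accuracy_score` reports.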

from sklearn.model_selection import train_test_split

from sklearn.tree import DecisionTreeClassifier

from sklearn.linear_model import LogisticRegression

from sklearn.metrics import accuracy_score

# Split arrays or matrices into random train and test subsets.

X = td.drop('survived', axis=1)

y = td['survived']

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.3, random_state=42)

# Train a decision tree classifier

dt = DecisionTreeClassifier()

dt.fit(X_train, y_train)

# Test the model

y_pred = dt.predict(X_test)

accuracy = accuracy_score(y_test, y_pred)

print('DecisionTreeClassifier Accuracy:', accuracy)

# Train a logistic regression model

logreg = LogisticRegression(max_iter=1000)  # raise the iteration cap so the solver can converge on unscaled features

logreg.fit(X_train, y_train)

# Test the model

y_pred = logreg.predict(X_test)

accuracy = accuracy_score(y_test, y_pred)

print('LogisticRegression Accuracy:', accuracy)